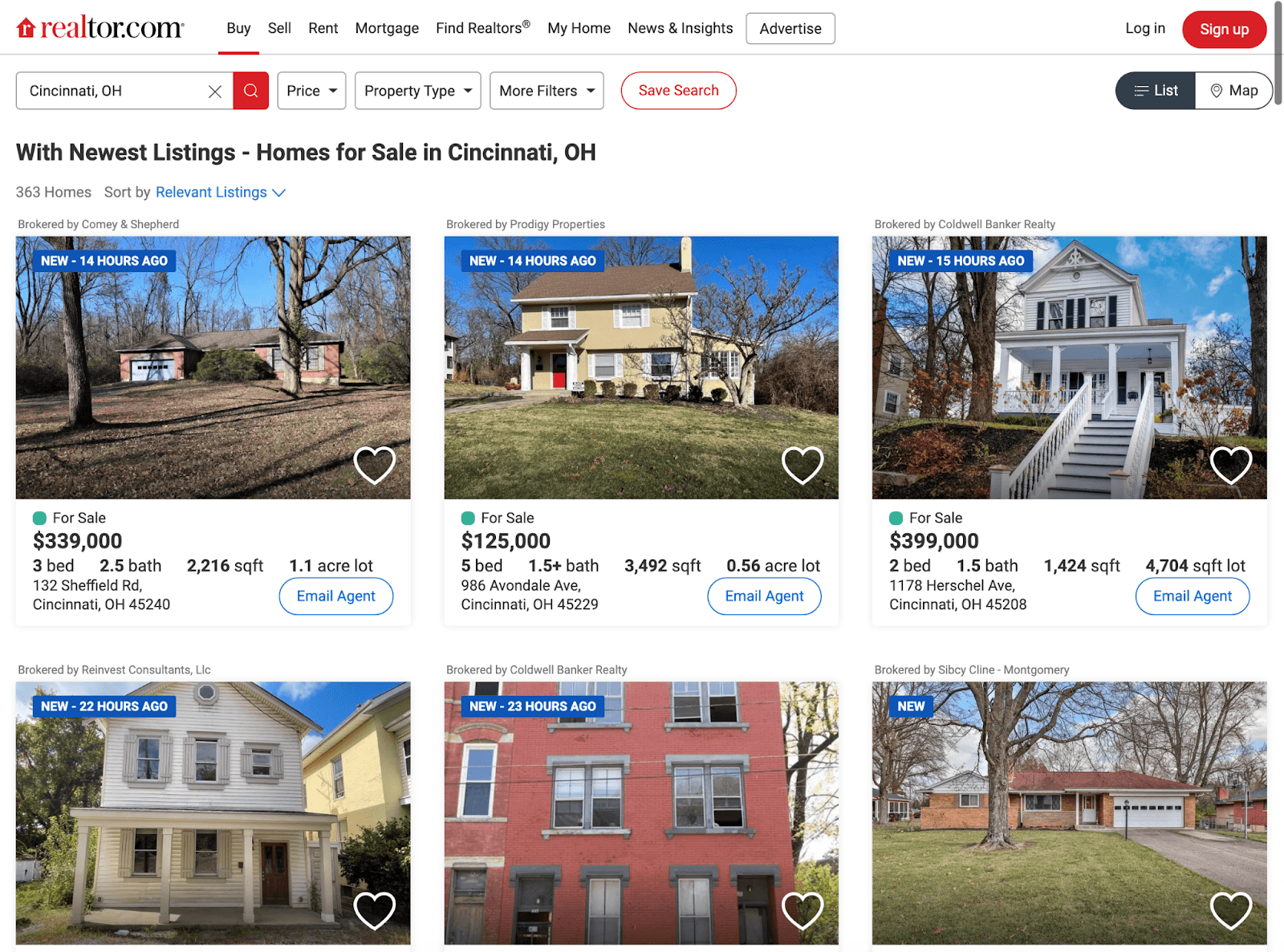

Over 5.64 million home listings are available at any given time across the US. Many of those listings are on Realtor.com, where thousands of real estate agents compete for them. Realtor.com receives over 60 million unique visitors each month, which means there's a huge amount of data you could draw on to grow your own business. How can you gain an advantage against this level of competition? Learn how to scrape data from Realtor.com.

This article will show you how to find hidden web data and extract precious nuggets from property pages on Realtor.com. Imagine having at your fingertips up-to-the-minute insights about properties in specific areas or tracking price changes using RSS feeds!

You may wonder if such an adventure comes with challenges – it does. Ever encountered bot detection walls while trying to scrape data? Or maybe captchas stopping you dead in your tracks?

Why Scrape Realtor.com?

As the largest real estate platform in America, Realtor.com provides a plethora of data for those seeking property-related information. But accessing it is not so simple, which is where web scraping comes into play.

Web scraping can be utilized to access the vast trove of data held by Realtor.com, enabling users to gain a competitive edge through understanding trends and unlocking hidden insights in the real estate market.

Specifically, scraping Realtor.com gives you more than basic details like property prices or addresses. It also reveals patterns in how properties sell, which could be vital for your business strategy. Whether you're looking for the number of homes sold in an area, the average price per square foot, or something else, Realtor.com can give you a sense of what's selling right now.

Collecting this data by hand, or paying someone else to do it, is the least effective way to accomplish your goals. Real estate data scraping can save up to 45% of data collection costs, so it makes sense to find a tool that can help you get this done quickly and accurately.

Tapping Into Hidden Insights Through Data Parsing

Raw scraped data can be messy or unstructured. That's why developers follow extraction with a step known as data parsing: code that cleans the raw output and converts it into consistent fields. The aim is to make sure the information harvested from platforms like Realtor.com ends up clear and easy to understand.
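To make the parsing step concrete, here is a minimal sketch that turns messy scraped strings into numbers and computes an average price per square foot. The sample records and the `parse_number` helper are made up for illustration; real scraped fields will look different.

```python
import re

def parse_number(text):
    """Pull the first number out of a string like '$450,000' or '1,800 sqft'."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    return float(match.group().replace(",", "")) if match else None

# Hypothetical raw values, shaped like text scraped from listing cards.
raw_listings = [
    {"price": "$450,000", "sqft": "1,800 sqft"},
    {"price": "$600,000", "sqft": "2,400 sqft"},
    {"price": "Contact agent", "sqft": "1,500 sqft"},  # no usable price
]

cleaned = []
for row in raw_listings:
    price = parse_number(row["price"])
    sqft = parse_number(row["sqft"])
    if price and sqft:  # skip rows where either value is missing
        cleaned.append({"price": price, "sqft": sqft, "price_per_sqft": price / sqft})

average = sum(r["price_per_sqft"] for r in cleaned) / len(cleaned)
print(f"{len(cleaned)} usable listings, average ${average:.2f} per sqft")
# prints "2 usable listings, average $250.00 per sqft"
```

Rows that can't be parsed are dropped rather than guessed at, which keeps bad values out of the average.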

From market trends to customer preferences, the data we can scrape from Realtor.com holds immense value. It’s like having a magnifying glass over the real estate landscape and being able to see patterns that were previously invisible.

2 Methods for Scraping Realtor.com

Scraping data from real estate sites such as Realtor.com can be intimidating, yet Python's straightforward syntax makes it simpler to accomplish. In particular, the libraries httpx and parsel are powerful tools in any web scraper's arsenal.

Method #1: Use Python for Web Scraping (coding required)

Given its combination of power and ease-of-use, Python is a popular language among developers for web scraping projects. This makes it an excellent choice when you need to scrape realtor or other similar sites. Using Python packages such as httpx allows us to make HTTP requests easier while parsel lets us parse HTML documents effortlessly.

Httpx provides asynchronous capabilities which means we can handle multiple tasks at once - perfect when dealing with large volumes of property listings on Realtor.com that require quick data extraction. Parsel, on the other hand, enables easy navigation through complex website structures using CSS selectors.

Moreover, if you want to start writing code without handling sessions or cookies manually, these libraries have your back. An httpx client keeps cookies across requests for you, whereas parsel extracts elements based on their attributes (like 'data-testid'), which makes parsing responses less cumbersome.

But remember: web scraping requires respect for the target site's rules and the laws surrounding data privacy, so always check before taking.

Step-by-Step Guide to Scraping Property Data from Realtor.com Using Python

Here's a practical guide on how you can start scraping this treasure trove of information.

Extracting Hidden Web Data

The first step is to get the property URLs. You can do this by typing your desired location into the search bar and then extracting all the resulting property links using CSS selectors. Realtor.com's markup includes data-testid attributes, which make handy targets for those selectors. The site also runs bot detection, so be careful not to trigger any red flags.

Next up is parsing these URLs. The goal here is to retrieve hidden web data embedded within each page’s HTML document--think of it as hunting for invisible nuggets of info. This might seem daunting at first but with some Python knowledge under your belt (and perhaps an introductory tutorial on Python web scraping) you'll soon master this task.
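One common form of hidden web data is a JSON blob sitting inside a script tag. The sketch below assumes the page embeds its data in a `<script id="__NEXT_DATA__">` element, as many Next.js sites do; that tag name, the sample page, and the field names are all assumptions for illustration, so confirm the real structure in your browser's developer tools.

```python
import json
import re

# Hypothetical page: the script id and JSON layout are assumptions.
SAMPLE_PAGE = """
<html><body>
<div id="app">Rendered listing markup goes here</div>
<script id="__NEXT_DATA__" type="application/json">
{"props": {"pageProps": {"property": {"list_price": 450000,
 "address": {"city": "Austin", "state": "TX"}}}}}
</script>
</body></html>
"""

SCRIPT_PATTERN = re.compile(
    r'<script[^>]*id="__NEXT_DATA__"[^>]*>(.*?)</script>', re.DOTALL
)

def extract_hidden_data(html):
    """Return the embedded JSON as a dict, or None if the script tag is absent."""
    match = SCRIPT_PATTERN.search(html)
    if match is None:
        return None
    return json.loads(match.group(1))

data = extract_hidden_data(SAMPLE_PAGE)
prop = data["props"]["pageProps"]["property"]
print(prop["list_price"], prop["address"]["city"])  # prints "450000 Austin"
```

When a page carries its data this way, reading the JSON is usually less brittle than picking values out of the rendered markup one selector at a time.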

To extract useful pieces such as the property price or address, make sure you identify the correct tags or classes used by the website. Your browser's developer tools will come in handy here.

Avoid Being Blocked

Web scraping may sound magical so far, but there's one muggle issue we need to tackle: the blocking challenges posed by bot detection. Luckily, rotating proxies provide a reliable fix. They spread your requests across many IP addresses, making your traffic look more like that of ordinary visitors than a scraper bot.
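The rotation itself can be very simple. This sketch cycles through a pool of proxies in round-robin order; the proxy addresses, the `ProxyRotator` class, and the URLs are placeholders for illustration, and a real pool would come from your proxy provider.

```python
from itertools import cycle

# Placeholder proxy addresses; substitute the ones your provider gives you.
PROXIES = [
    "http://proxy-1.example.com:8000",
    "http://proxy-2.example.com:8000",
    "http://proxy-3.example.com:8000",
]

class ProxyRotator:
    """Hand out proxies in round-robin order."""

    def __init__(self, proxies):
        self._pool = cycle(proxies)

    def next_proxy(self):
        return next(self._pool)

rotator = ProxyRotator(PROXIES)
urls = [f"https://example.com/listing/{i}" for i in range(4)]  # placeholders

# Pair each request with the next proxy in the pool.
assignments = [(url, rotator.next_proxy()) for url in urls]
for url, proxy in assignments:
    print(f"{url} via {proxy}")
```

Each pair would then be handed to your HTTP client. httpx supports proxies, but the name of the argument has changed between releases, so check the documentation for the version you have installed.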

You're now ready for action. Remember, practice makes perfect when learning how to scrape Realtor.com successfully, and always respect the website's terms of use.

Method #2: Use Magical to Scrape (easy method)

Imagine being able to browse the many real estate listings on Realtor.com, one of America's most expansive property listing websites, and extract the data you need. This is no wizardry but practical automation at work.

To begin with, we need to use the search bar effectively. By inputting a city or area name into the search bar on Realtor.com, you can access thousands of properties in that region with ease. It's like casting a wide net over your target location.

The Magic Behind Property URLs

Every listed property has its own unique URL, and the page behind it holds valuable data about the property: its price, address, and more. Think of these as hidden keys waiting to be discovered. The challenge here lies not just in extracting this data but also in making sure our scraper remains undetected by bot detection systems.

But worry not; every problem has a solution. Bypassing such challenges while scraping Realtor.com efficiently requires something akin to an invisibility cloak: proxy rotation.

Navigating Through Proxy Servers

Proxy servers act as intermediaries between your computer (the client) and Realtor.com (the server). Using multiple proxies allows us to change our IP address periodically, giving us that much-needed invisibility from bot detectors when we start scraping.

While all this might seem complex initially, remember even learning Wingardium Leviosa wasn't easy at first. But once mastered, it opens up powerful possibilities, enabling us to analyze the real estate market better and make informed decisions.

Tracking Property Changes on Realtor.com using RSS Feeds

If you're a keen observer of the real estate market, then tracking property changes can be a crucial part of your strategy. Luckily, Realtor.com - one of the largest US real estate listing websites - provides RSS feeds to facilitate tracking property changes.

RSS feeds are like continuous updates for specific categories. You get immediate notifications about price changes, open house events, sold properties, and new listings as they happen - straight to your inbox or feed reader.

Understanding Price Changes with RSS Feeds

The Price Change Feed is particularly useful for keeping an eye on fluctuations in property prices. It helps you monitor the ups and downs without having to manually check each listing every day. This can give you an idea of when it might be advantageous to purchase or sell real estate according to pricing movements.

You can access these feeds by visiting their respective links:

Price Change Feed

Open House Feed

Sold Property Feed

New Property Feed

Tracking changes via these RSS feeds will not only save time but also allow more efficient analysis of the ever-changing real estate market landscape. So, if you're serious about keeping up with real estate trends or simply tracking a few properties of interest, these RSS feeds are an essential tool to add to your arsenal.
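If you'd rather process a feed in code than in a feed reader, Python's standard library can parse it. The sketch below reads a made-up RSS 2.0 sample; the item titles and links are invented, and the actual fields in Realtor.com's feeds may differ.

```python
import xml.etree.ElementTree as ET

# Hypothetical feed content in the standard RSS 2.0 layout.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0">
  <channel>
    <title>Price changes (sample)</title>
    <item>
      <title>12 Oak St - price reduced to $440,000</title>
      <link>https://example.com/listing/example-1</link>
      <pubDate>Mon, 01 Jan 2024 10:00:00 GMT</pubDate>
    </item>
    <item>
      <title>48 Elm Ave - price reduced to $590,000</title>
      <link>https://example.com/listing/example-2</link>
      <pubDate>Tue, 02 Jan 2024 09:30:00 GMT</pubDate>
    </item>
  </channel>
</rss>"""

def parse_feed(xml_text):
    """Return the title, link, and publication date of every feed item."""
    root = ET.fromstring(xml_text)
    return [
        {
            "title": item.findtext("title"),
            "link": item.findtext("link"),
            "published": item.findtext("pubDate"),
        }
        for item in root.iter("item")
    ]

items = parse_feed(SAMPLE_FEED)
for entry in items:
    print(entry["published"], "|", entry["title"])
```

Storing the links you've already seen and comparing them against each new fetch is one simple way to surface only the changes since your last check.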

Overcoming Blocking Challenges When Scraping Realtor.com with ScrapFly API

Web scraping is a powerful tool, but it's not without its challenges. One of the most significant obstacles you might encounter when attempting to scrape Realtor.com is getting past blocking and bot detection mechanisms. However, there's a reliable solution at hand: using an efficient service like ScrapFly API.

What makes this so effective? Well, for starters, it employs proxy rotation techniques that make your scraper seem less like a bot and more like multiple genuine users accessing data from different locations.

The Magic of Proxy Rotation

In layman’s terms, proxies are intermediary servers separating end users from the websites they browse. They can provide varying levels of privacy, security, and functionality based on use case needs.

When you use proxy rotation in web scraping tasks, such as extracting real estate listings or property prices from sites like Realtor.com, each request sent to the website comes from a new IP address. This helps you collect the data you need without running into per-IP request limits.

Solving Captchas with ScrapFly API

Captcha systems serve as another barrier for scrapers on many websites, including Realtor.com. Their purpose is to differentiate between human users and bots. But don't worry: the same ScrapFly API that helps us handle proxy rotation also has solutions for captchas, so you don't have to solve them manually every time you pull data.

In my personal experience with using these tools while working on various projects related to data extraction from major platforms (including one where I had to scrape property URLs), I've found them exceptionally helpful in overcoming these hurdles.

So, if you're planning to start scraping Realtor.com for your next big data project, consider leveraging the powerful features of ScrapFly API. It can streamline the process considerably and make it more productive.

A Final Word

When you learn how to scrape Realtor.com, you will have a considerable advantage over your competitors. Competition in the real estate market is already tough, so any advantage you get is a welcome one.

Plus you can use the AI tool Magical to help you store your data and ultimately save time. Just download it for your Chrome browser here (it's free).